Businesses rushed into AI. Then came the panic.

In 2026, more companies are waking up to a hard truth: sending internal data to public AI tools is a real risk. Sensitive documents, client details and proprietary ideas can pass through systems you don’t control. For many enterprises, that’s no longer acceptable.

This is where Sovereign AI infrastructure changes the game.

Instead of relying on external APIs, you run your own AI inside your own environment. No third-party access. No hidden data exposure. Just full control over how your data is processed, stored and used.

In this guide, you’ll learn how to build a secure Private LLM hosting architecture from the ground up. We’ll walk through what you actually need—servers, GPUs, models—and how to deploy them safely behind your own network.

This is not theory. It’s a practical Enterprise self-hosted LLM guide designed for real businesses that care about security, compliance, and performance.

By the end, you’ll understand how to:

- Keep your AI data completely private

- Run powerful language models on your own infrastructure

- Avoid the risks of public AI platforms

If you want the benefits of AI without giving up control of your data, this is where you start.

Why Public AI APIs Are a Serious Data Risk in 2026

Using public AI tools might feel fast and convenient—but for businesses, it comes with hidden risks that are getting harder to ignore.

Every time your team uses a public AI API, your data doesn’t stay inside your company. It gets sent to third-party servers. That can include:

- Internal documents

- Customer data

- Financial information

- Business strategies

Even if providers claim not to store or misuse data, the reality is simple: you don’t fully control what happens once it leaves your environment.

The Real Problem: You Don’t Own the Pipeline

Most standard cloud AI setups are built for scale, not privacy. Your prompts and outputs may pass through shared infrastructure, logging systems, or external processing layers.

This creates multiple risks:

- Data exposure through logs or debugging systems

- Unauthorized access in shared environments

- Lack of visibility into how data is handled

For enterprises, this is a direct conflict with compliance and security policies.

2026 Regulations Are Making This Worse :

Data protection laws have become stricter worldwide. Regulations around data residency and privacy now require businesses to:

- Keep sensitive data within specific geographic regions

- Maintain full control over data processing

- Provide audit trails and transparency

Public AI APIs often cannot guarantee these requirements.

This means companies using standard AI tools may unknowingly violate GDPR-style regulations.

Why Standard Cloud AI Is No Longer Enough :

Traditional cloud setups were never designed for high-risk AI workloads. They prioritize convenience over control.

That’s why more businesses are moving toward Sovereign AI infrastructure—where everything runs inside their own trusted environment.

Instead of sending data out, you bring AI in.

This shift is driving the need for a secure Private LLM hosting architecture, where:

- Data never leaves your servers

- Access is fully controlled

- Compliance is built into the system

If your business handles sensitive data, relying on public AI is no longer just a technical decision—it’s a risk decision.

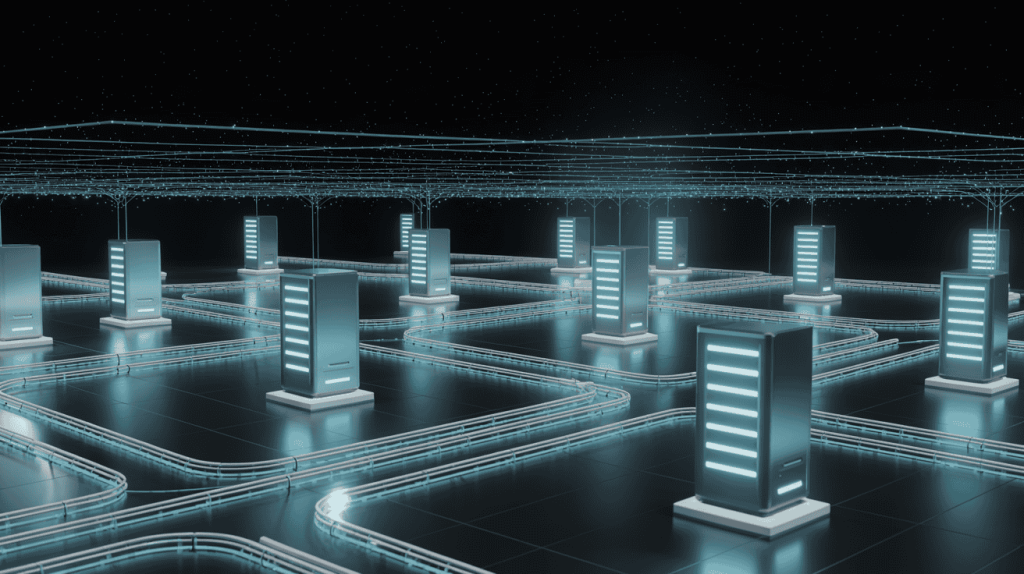

What is Sovereign AI Infrastructure?

Sovereign AI infrastructure is a simple idea: you keep full control over your AI.

Instead of sending your data to external platforms, you run AI models on your own servers inside your own environment. That means your data, your models and your systems all stay within your control.

No third-party processing. No unknown data handling.

Public AI vs Private AI: What’s the Difference?

To understand this better, here’s how the two approaches compare:

Public AI (OpenAI-style APIs)

- Your data is sent to external servers

- Processing happens outside your control

- Limited visibility into how data is stored or used

Private / Self-Hosted AI

- Your data stays inside your infrastructure

- Models run on your own servers

- Full control over access, storage, and security

This shift is exactly why more companies are moving away from public AI tools.

The foundation of enterprise AI in the coming years is sovereignty. You cannot allow your company’s proprietary intelligence and institutional knowledge to be exported and learned by third-party public models.

> — Industry Consensus on Sovereign Cloud Architecture, 2026

The Core Idea Behind Sovereign AI :

At its core, Sovereign AI infrastructure means full ownership.

You control:

- The data your AI uses

- The models running your applications

- The servers and networks powering everything

Nothing leaves your environment unless you allow it.

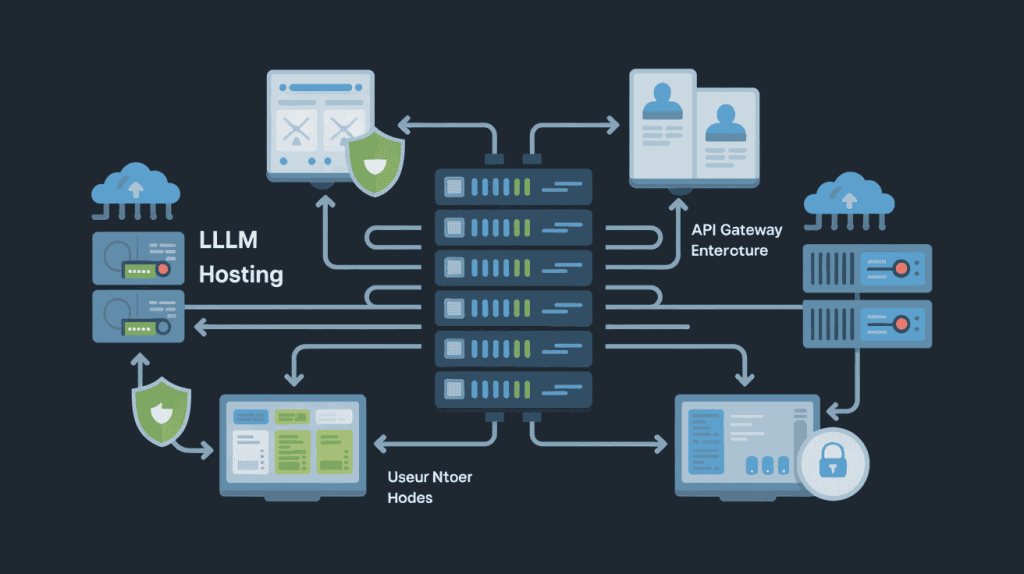

Private LLM Hosting Architecture Explained :

A Private LLM hosting architecture may sound technical, but the concept is simple.

It’s the setup that lets you run an AI model inside your own environment without sending any data to external providers. Everything—processing, storage and access—happens within your control.

What It Includes :

A typical Private LLM hosting architecture is made up of four core parts:

- Model: The AI itself (like Llama or Mistral) that understands input and generates responses

- Compute: GPU servers that provide the power needed to run the model efficiently

- Storage: Secure space where your data, documents, and embeddings are stored

- API Layer: The internal access point that connects your apps, tools, or teams to the AI

How It Works (Simple Flow) :

Here’s how everything connects in a real setup:

- A user or internal system sends a request

- The request goes through your private API

- The model runs on your GPU server

- It processes data stored inside your system

- The response is returned to the user

All of this happens within your infrastructure. Nothing is sent outside.
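Seen from an internal application, the flow above can be sketched as a single HTTP call to your private API. This is a minimal sketch, not a definitive client: it assumes your API layer exposes an OpenAI-compatible chat endpoint (some open-source serving frameworks offer one), and the host name `llm.internal.example`, the model name and the token are hypothetical placeholders.

```python
import json
import urllib.request

# Hypothetical internal endpoint; replace with your own private API address.
PRIVATE_API_URL = "https://llm.internal.example/v1/chat/completions"


def build_chat_request(prompt: str, model: str, token: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for the private API layer."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        PRIVATE_API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )


def ask(prompt: str, model: str, token: str, timeout: float = 30.0) -> str:
    """Send the request over the private network and return the reply text."""
    request = build_chat_request(prompt, model, token)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # Assumed response shape: OpenAI-style "choices" list.
    return body["choices"][0]["message"]["content"]
```

Because the URL resolves only inside your network, the prompt and the response never cross your perimeter. If your serving layer uses a different request or response format, only `build_chat_request` and the last line of `ask` need to change.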

Hardware Requirements for Private LLM Hosting :

Running your own AI isn't about having the biggest server. It's about having the right hardware, especially when it comes to GPUs.

If you’re building a Private LLM hosting architecture, your setup needs to balance performance, cost and scalability.

GPU Requirements (The Most Important Part) :

The GPU is the core of your AI system. It handles the actual model processing and your performance depends heavily on it.

- Entry-level setup: Suitable for small teams or testing environments. Typically uses mid-range GPUs with smaller models or quantized versions.

- Enterprise-level setup: Designed for production workloads and multiple users. Requires high-performance GPU servers (like A100, H100 or similar) for faster inference and larger models.

If you get the GPU wrong, everything else will feel slow.
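A quick way to size a GPU is to estimate how much memory the model weights need. The sketch below uses a common rule of thumb (parameters times bytes per weight, plus a margin); the 20% overhead figure is an assumption for illustration, and real usage also depends on context length and batch size.

```python
def estimate_vram_gb(params_billions: float, bits_per_weight: int,
                     overhead: float = 0.2) -> float:
    """Rough GPU memory (in GB) needed to load a model.

    overhead is an assumed extra fraction for the KV cache, activations
    and runtime buffers; treat it as a starting point, not a guarantee.
    """
    # 1 billion parameters at 8 bits per weight is roughly 1 GB.
    weight_gb = params_billions * bits_per_weight / 8
    return round(weight_gb * (1 + overhead), 1)


def fits_on_gpu(params_billions: float, bits_per_weight: int,
                gpu_vram_gb: float) -> bool:
    """True if the estimate fits within a single GPU's memory."""
    return estimate_vram_gb(params_billions, bits_per_weight) <= gpu_vram_gb


print(estimate_vram_gb(7, 16))   # 7B model, 16-bit weights
print(estimate_vram_gb(7, 4))    # same model, 4-bit quantized
print(fits_on_gpu(70, 4, 80))    # 70B quantized on one 80 GB GPU
print(fits_on_gpu(70, 16, 80))   # 70B at 16-bit on one 80 GB GPU
```

Under these assumptions, a quantized 7B model fits comfortably on a mid-range card, while a 70B model at 16-bit precision needs more than one 80 GB GPU. That is the practical difference between the entry-level and enterprise setups described above.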

CPU, RAM and Storage Basics :

While GPUs do the heavy lifting, the rest of your hardware still matters:

- CPU: Handles background tasks, API requests, and system operations

- RAM: Needed to support model loading and smooth data handling (higher is better for stability)

- Storage: Fast NVMe storage is essential for quick data access and loading large models

Dedicated Server vs VPS vs Bare Metal :

Choosing the right environment is just as important as the hardware itself:

- Cloud VPS: Good for testing, but often limited in GPU power and performance consistency

- Dedicated GPU servers: A strong balance between cost and performance for most businesses

- Bare metal infrastructure: Best for full control, maximum performance, and strict compliance environments

Many businesses today prefer privacy-focused hosting providers that offer high-bandwidth, GPU-ready servers with minimal restrictions—giving you both performance and control without relying on traditional cloud platforms.

Self-Hosted LLM : Step-by-Step Setup for Secure AI Deployment

Setting up your own AI system may sound complex, but when broken down into clear steps, it becomes manageable for any business with the right approach.

This Enterprise self-hosted LLM guide walks you through the exact process to build a secure and scalable AI setup inside your own infrastructure.

Step 1: Choose the Right Model :

Start by selecting an LLM that fits your use case.

Choose based on your needs: speed, accuracy and hardware capacity.

The gap between public closed-source models and private open-source models has officially closed. Enterprises no longer have to sacrifice performance to maintain data privacy.

Step 2: Deploy GPU Infrastructure :

Next, you need the right hardware to run your model.

You can:

- Rent GPU servers

- Use dedicated infrastructure

- Deploy on private cloud or bare metal

For businesses looking for reliable performance and privacy-focused hosting, providers like Owrbit are widely preferred for GPU servers due to their strong network, high-performance hardware and flexible deployment options.

Step 3: Install a Serving Framework :

Now you need software to run and manage your model.

Open-source serving frameworks allow your model to accept requests and generate responses efficiently.

Step 4: Secure Your Environment :

Security is critical in any Private LLM hosting architecture.

Make sure to:

- Set up firewalls

- Restrict access with private networks

- Use authentication and access controls

This ensures your AI system is only accessible to authorized users.
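As an illustration of the last two points, here is a minimal sketch of a gate that your API layer could run before a request ever reaches the model. The subnet, the client name and the token are hypothetical values; a production setup would usually load secrets from a vault and enforce the network rule at the firewall as well.

```python
import hmac
import ipaddress

# Hypothetical values for illustration: one internal subnet and one API token.
ALLOWED_NETWORKS = [ipaddress.ip_network("10.0.0.0/8")]
API_TOKENS = {"analytics-service": "replace-with-a-long-random-secret"}


def is_internal(client_ip: str) -> bool:
    """True if the caller's address falls inside an allowed private network."""
    address = ipaddress.ip_address(client_ip)  # raises ValueError if malformed
    return any(address in network for network in ALLOWED_NETWORKS)


def is_authenticated(client_id: str, presented_token: str) -> bool:
    """Check the token in constant time to avoid timing side channels."""
    expected = API_TOKENS.get(client_id)
    if expected is None:
        return False
    return hmac.compare_digest(expected.encode(), presented_token.encode())


def allow_request(client_ip: str, client_id: str, presented_token: str) -> bool:
    """A request must come from the private network AND carry a valid token."""
    return is_internal(client_ip) and is_authenticated(client_id, presented_token)
```

Requiring both conditions means a leaked token is useless from outside the network, and a machine inside the network still cannot call the model without credentials.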

Step 5: Connect Internal Tools :

Integrate your AI with your existing systems:

- CRM platforms

- ERP systems

- Internal documents and knowledge bases

This is where AI becomes truly useful—by working directly with your business data.
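One common way to connect a model to internal documents is to look up the most relevant passages and place them in the prompt. The sketch below is deliberately naive: it ranks documents by shared words as a stand-in for the embedding search a real deployment would likely use, and the document names and contents are made up for illustration.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def top_documents(question: str, documents: dict[str, str], limit: int = 2) -> list[str]:
    """Rank documents by how many question words they share."""
    question_words = tokenize(question)
    scored = [
        (len(question_words & tokenize(body)), name)
        for name, body in documents.items()
    ]
    # Highest score first; ties broken alphabetically by document name.
    ranked = sorted(scored, key=lambda pair: (-pair[0], pair[1]))
    return [name for score, name in ranked if score > 0][:limit]


def build_prompt(question: str, documents: dict[str, str], limit: int = 2) -> str:
    """Assemble a prompt that grounds the model in internal documents."""
    names = top_documents(question, documents, limit)
    context = "\n\n".join(f"[{name}]\n{documents[name]}" for name in names)
    return f"Answer using only the context below.\n\n{context}\n\nQuestion: {question}"


# Hypothetical internal knowledge base.
docs = {
    "refund-policy": "Refunds are issued within 14 days of purchase.",
    "onboarding": "New hires receive a laptop on day one.",
}
print(build_prompt("How many days do refunds take?", docs))
```

Both the lookup and the prompt assembly run on your own servers, so the documents are read, ranked and sent to the model without leaving your environment.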

Security Best Practices for Sovereign AI Deployments :

When you build a Sovereign AI infrastructure, security is not an add-on—it’s the entire reason you’re doing it.

Here are the essential security practices every business must implement when deploying a Private LLM hosting architecture:

- Air-gapped or private networks: Keep your AI systems isolated from the public internet whenever possible. Use private networks or restricted access environments to ensure no external exposure.

- Encryption (data at rest and in transit): Encrypt everything. Encrypting data at rest protects stored files and models; encrypting data in transit secures communication between systems. This ensures your data stays protected even if accessed improperly.

- Access control (RBAC): Use role-based access control to limit who can access what. Not everyone in your organization should have full access to your AI system.

- Logging and auditing: Track every action inside your system. Logs help you detect unusual activity, maintain compliance, and investigate incidents quickly.

- Backup strategies: Always have secure backups of your models, data, and configurations. In case of failure or attack, you can restore operations without losing critical information.

- Network monitoring and alerts: Continuously monitor your infrastructure for suspicious behavior. Set up alerts to respond quickly to potential threats.

- Regular security testing: Perform routine checks, vulnerability scans, and internal testing to identify weak points before attackers do.
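Two of the practices above, role-based access control and auditing, fit together naturally: every permission check can leave a log entry. The following is a minimal sketch under assumed role names and actions (all hypothetical). Each entry stores the hash of the previous one, which is one simple way to make later tampering detectable.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical roles and permissions for illustration.
ROLE_PERMISSIONS = {
    "admin": {"query", "upload_documents", "manage_models"},
    "analyst": {"query", "upload_documents"},
    "viewer": {"query"},
}


def is_allowed(role: str, action: str) -> bool:
    """Unknown roles get no permissions at all."""
    return action in ROLE_PERMISSIONS.get(role, set())


class AuditLog:
    """Append-only log where each entry stores the hash of the previous one."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself.
        body = {key: value for key, value in entry.items() if key != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, user: str, role: str, action: str) -> dict:
        """Check the permission and log the attempt, allowed or not."""
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "allowed": is_allowed(role, action),
            "previous_hash": self.entries[-1]["hash"] if self.entries else "",
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return False if any entry was edited or the chain was broken."""
        previous = ""
        for entry in self.entries:
            if entry["previous_hash"] != previous or entry["hash"] != self._digest(entry):
                return False
            previous = entry["hash"]
        return True
```

Denied attempts are logged too, since those are often the most useful entries when investigating an incident. In practice the log would also be shipped to separate, write-protected storage so that an attacker on the AI server cannot simply rewrite the whole chain.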

Real-World Case Studies: How Businesses Are Using Sovereign AI

Understanding theory is helpful, but real results build trust. Here are two simple examples of how companies are using Sovereign AI infrastructure to solve real business problems.

How a Healthcare Provider Protected Patient Data with Private AI :

A mid-sized healthcare company wanted to use AI to summarize patient records and assist doctors with faster decision-making. However, strict compliance rules (like HIPAA) made public AI tools too risky.

Instead of using external APIs, they built a Private LLM hosting architecture inside their own secure environment.

What they did:

- Deployed AI models on dedicated GPU servers within their private network

- Ensured all patient data stayed inside their infrastructure

- Restricted access using role-based permissions

- Enabled full logging for audits and compliance checks

The result:

- 100% control over sensitive patient data

- Full compliance with healthcare regulations

- Faster internal workflows without security risks

This allowed them to safely adopt AI without exposing confidential medical information.

How a Financial Firm Reduced Costs by 40% with Self-Hosted AI :

A financial services company was heavily relying on public AI APIs for data analysis, reporting and client insights. Over time, their costs kept increasing due to per-request pricing.

They decided to switch to a self-hosted LLM approach by building their own Sovereign AI infrastructure.

What they did:

- Moved from API-based AI to self-hosted models

- Invested in dedicated GPU servers for consistent performance

- Integrated AI directly into internal financial systems

- Optimized workloads to handle high-volume requests efficiently

The result:

- Reduced AI operational costs by nearly 40%

- Eliminated dependency on third-party APIs

- Gained full control over sensitive financial data

More importantly, they turned AI from an ongoing expense into a long-term investment.

These examples show a clear trend: businesses are no longer just experimenting with AI—they are bringing it fully in-house to gain control, reduce risk, and improve efficiency.

Frequently Asked Questions (FAQs) :

Got questions about Sovereign AI, private LLMs, or hosting your own AI securely? Here are clear, practical answers to the most common questions businesses are asking in 2026.

Is it safer to use a private LLM instead of public AI tools?

Yes. A private LLM setup is significantly safer because:

- Your data is not sent to third-party servers

- You control storage, access, and processing

- Your prompts and outputs are not exposed to external logging or data sharing

This is why businesses are moving toward Private LLM hosting architecture for sensitive workloads.

How much does it cost to host your own LLM?

Costs depend on your scale and hardware:

- Small setups → lower monthly cost with basic GPUs

- Mid-level deployments → moderate cost for business usage

- Enterprise setups → higher cost but better performance and scalability

Over time, self-hosted AI is often more cost-effective than paying per API request.
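Whether self-hosting pays off depends on your request volume. The sketch below computes the break-even point between a fixed monthly server cost and per-request API pricing. The prices used are hypothetical placeholders, not quotes from any provider, and the calculation ignores staff time and setup costs.

```python
import math


def monthly_api_cost(requests_per_month: int, cost_per_request: float) -> float:
    """Total monthly spend under per-request pricing."""
    return requests_per_month * cost_per_request


def break_even_requests(server_cost_per_month: float, cost_per_request: float) -> int:
    """Smallest monthly request count at which a fixed-cost server
    is no more expensive than paying per request."""
    return math.ceil(server_cost_per_month / cost_per_request)


# Hypothetical figures for illustration only.
SERVER_COST = 2000.0      # fixed monthly cost of a GPU server
COST_PER_REQUEST = 0.01   # average API cost per request

threshold = break_even_requests(SERVER_COST, COST_PER_REQUEST)
print(f"Self-hosting breaks even at about {threshold:,} requests per month")
```

Below the threshold, per-request pricing is cheaper; above it, every additional request on your own server costs close to nothing extra. Plug in your own numbers before making a decision.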

What hardware is required for private LLM hosting?

The most important component is the GPU. You also need:

- High-performance GPU (A100, H100, or similar)

- Sufficient RAM (32GB–256GB depending on scale)

- Fast SSD/NVMe storage

- Reliable CPU for system operations

Choosing a reliable GPU hosting provider with strong network performance can make a big difference in overall results.

Can I host an LLM without a GPU?

Technically yes, but it’s not practical for real-world business use.

Without a GPU:

- Performance will be extremely slow

- Large models may not run properly

- User experience will suffer

For production environments, GPU servers are essential.
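A rough way to see why is to look at memory bandwidth. When generating text for a single user, the hardware has to read roughly all of the model's weights for every token, so speed is capped by how fast memory can be read. The sketch below applies that rule of thumb; the bandwidth figures are assumed round numbers for illustration, so check your own hardware specifications.

```python
def max_tokens_per_second(memory_bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on single-stream generation speed.

    Assumes each generated token requires reading all model weights
    from memory once, so speed is at most bandwidth / model size.
    """
    return memory_bandwidth_gb_s / model_size_gb


# Assumed, illustrative figures.
MODEL_SIZE_GB = 14.0          # e.g. a 7B model at 16-bit precision
CPU_BANDWIDTH_GB_S = 50.0     # hypothetical server CPU memory bandwidth
GPU_BANDWIDTH_GB_S = 2000.0   # hypothetical data-center GPU memory bandwidth

cpu_speed = max_tokens_per_second(CPU_BANDWIDTH_GB_S, MODEL_SIZE_GB)
gpu_speed = max_tokens_per_second(GPU_BANDWIDTH_GB_S, MODEL_SIZE_GB)
print(f"CPU: up to ~{cpu_speed:.1f} tokens/s, GPU: up to ~{gpu_speed:.1f} tokens/s")
```

With these assumed numbers the GPU ceiling is about 40 times higher than the CPU ceiling. Real throughput will be lower than either bound, but the gap explains why CPU-only hosting feels slow once more than a few people use the system.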

Which models are best for self-hosted AI?

Popular models include:

- Llama (good for general use)

- Mixtral (efficient and scalable)

- Other open-source LLMs depending on your needs

The right model depends on your use case, hardware, and performance requirements.

Is self-hosted AI compliant with GDPR and other regulations?

Yes, if implemented correctly.

With Sovereign AI infrastructure, you can:

- Control where data is stored

- Ensure data stays within required regions

- Maintain full audit logs

This makes it easier to meet compliance requirements compared to public AI APIs.

How do I secure my private LLM setup?

You should implement:

- Private or isolated networks

- Encryption (data at rest and in transit)

- Role-based access control (RBAC)

- Continuous monitoring and logging

- Regular backups

Security is a core part of any enterprise self-hosted LLM deployment.

How long does it take to deploy a private LLM?

A basic setup can be done within a few hours to a couple of days, depending on:

- Infrastructure readiness

- Model size

- Security configuration

Advanced enterprise setups may take longer due to compliance and integration requirements.

Can I integrate a private LLM with my business tools?

Yes. You can connect your AI system with:

- CRM platforms

- ERP systems

- Internal databases

- Knowledge bases

This is where AI becomes highly valuable—by working directly with your internal data.

Is Sovereign AI infrastructure only for large enterprises?

No. While large companies benefit the most, even small and mid-sized businesses are now adopting private AI setups thanks to:

- Affordable GPU hosting

- Open-source models

- Flexible infrastructure options

With the right provider, entry barriers are much lower than before.

What are the main benefits of private LLM hosting?

- Full data privacy and control

- Better compliance with regulations

- Predictable long-term costs

- No dependency on external AI providers

- Customization based on business needs

What should I look for in a GPU hosting provider?

When choosing a provider, consider:

- High-performance GPUs

- Strong network connectivity

- Privacy-focused infrastructure

- Scalability options

- Reliable uptime

Many businesses prefer providers that specialize in dedicated GPU environments rather than general cloud platforms, as this ensures better control and consistent performance.

Is Sovereign AI the future of enterprise AI?

Yes. As regulations tighten and data becomes more valuable, businesses are shifting toward full control over their AI systems.

Sovereign AI infrastructure is quickly becoming the standard for organizations that prioritize security, compliance, and long-term scalability.

Why are businesses moving away from public AI APIs?

Because of increasing concerns around:

- Data leaks

- Lack of control

- Compliance risks

- Rising API costs

Private LLM hosting architecture solves these problems by keeping everything in-house.

If you’re planning to move toward a secure, self-hosted AI setup, having the right infrastructure and hosting partner can make the process faster, safer and more reliable.

Conclusion: Take Back Control of Your AI Data

In 2026, data is not just important—it’s your biggest business asset.

Every time you send sensitive information to public AI tools, you risk losing control over that asset. Whether it’s customer data, internal documents or business strategies, once it leaves your environment, you can’t fully control how it’s handled.

That’s why more businesses are moving toward Sovereign AI infrastructure.

If you don’t own the infrastructure, you don’t own the AI. And if you don’t own the AI, you don’t control your data.

By building your own Private LLM hosting architecture, you:

- Keep your data fully private

- Stay compliant with evolving regulations

- Eliminate dependency on third-party AI platforms

- Gain long-term cost and performance control

This is not just a technical upgrade. It’s a strategic decision.

The companies that take control of their AI today will be the ones that stay secure, compliant, and competitive tomorrow.
